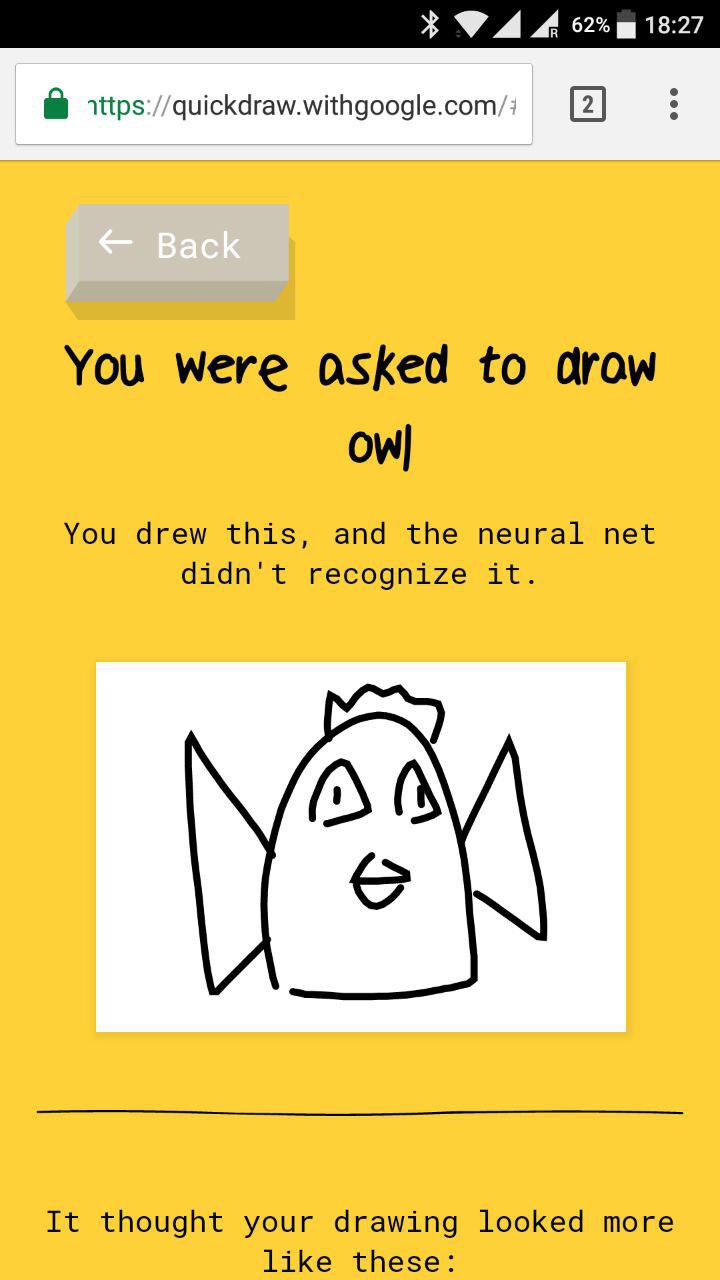

Quick, Draw! Can a neural network recognize drawings? You can help train the network by adding your drawings to the world's largest drawing database. The database is publicly available and is used for machine learning research.

I think Quick, Draw! has been a genius move by Google, because it's a lot of fun to play and then show your friends the terrible drawings in which the AI magically spotted the right answer. As a result, it gets shared a lot on social networks, which from a marketing standpoint is huge. Beyond that, the high recognition rate shows how good Google's software is, so from a technical standpoint you have to admire them. And I'm quite sure that all the drawings help train their artificial intelligence system, which they may eventually use to detect objects in Google Images.

That looks like a perfectly respectable snail, to me. Maybe it's a new word and it's still learning about it? Well played, Google, well played.

I think I have a theory about what's going on. I tried again on my home system and again got 0/6. At home I tried two different browsers, so I know it's not the browser. But just now I'm at work, having had to do some maintenance, and I tried it again: easily 6/6, with most of the guesses coming before I was done. There's one significant difference: my home system has a 144 Hz display instead of a 60 Hz one (the refresh rate of my work display, and the most common one). Why might that be relevant? Because I know it's working from vector data: one of the preview-size drawings rendered some lines that I drew but that didn't show up in the original drawing, so it must be recreating the image from the cursor data instead of simply saving the bitmap. I believe it's taking the speed of drawing into account, as well as the order of the lines; similar-looking shapes may be drawn differently depending on the dominant shape present, and it's probably picking up on that. So why would 144 Hz make a difference? Well, it would be sampling the cursor position at 2.4 times the rate (144 / 60 = 2.4). That's going to make me look like a speed demon compared to its samples, and probably throw off its calculations.

I learned how to quickly get it to recognize drawings that had previously failed. I'm doing the opposite: trying to "elude" the machine intelligence by stumping the computer with a drawing that a human would instantly recognize as the object in question.

The first technique is to draw the object in three dimensions. This works because most people don't do that, so the AI has no comparison point. A good example is "camera": if you draw it from a fairly oblique angle, with the protruding lens pointed away, you can produce something a person would easily recognize but that the computer can't process, because nobody else drew it that way.

The other technique is more mysterious: draw the object piecemeal, in an unusual order. Example: "fish." I drew the eye, then the tail fin, then put the mouth/lip shape in front of the eye, then added the gill line, then the dorsal fin, then the pectoral fin, and finally added the oval of the body to unify the disconnected bits. The final drawing was clearly a classic fish sketch, and very similar to the comparison drawings shown afterward, but for some reason the computer couldn't figure out what I was doing. This may suggest something interesting about the logic being applied. The model is probably a sequence classification model rather than a traditional computer vision model, so its input is a representation of the way you moved your mouse rather than an overall view of the image. By moving your mouse in a "weird" way, even if the final result is the same, you screw up the recognition. It's like writing a sentence with all the right words, but in the wrong order.
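To make the commenters' stroke-order and 144 Hz arguments concrete, here is a minimal Python sketch. It assumes a Quick, Draw!-style vector format in which a drawing is an ordered list of strokes, each stored as `[xs, ys]`, and it assumes (as the commenters guess) that the recognizer consumes the strokes as an ordered sequence of `(dx, dy, pen_lifted)` steps, a "stroke-3" style of input used by some sequence models. The helpers `to_stroke3`, `point_set` and `sample_line` are hypothetical and this is not Google's recognizer. It only shows that two fish with the same final picture give a sequence model different inputs, and that the same stroke polled at 144 Hz instead of 60 Hz produces about 2.4 times as many points.

```python
# Hypothetical helpers, not Google's recognizer. Assumed format: a drawing is an
# ordered list of strokes, and each stroke is a pair of coordinate lists [xs, ys].


def to_stroke3(drawing):
    """Flatten strokes into (dx, dy, pen_lifted) steps, keeping the drawing order."""
    steps = []
    prev_x, prev_y = 0, 0
    for xs, ys in drawing:
        for i, (x, y) in enumerate(zip(xs, ys)):
            pen_lifted = 1 if i == len(xs) - 1 else 0  # 1 on the last point of each stroke
            steps.append((x - prev_x, y - prev_y, pen_lifted))
            prev_x, prev_y = x, y
    return steps


def point_set(drawing):
    """Order-free view of the same drawing: just the set of sampled points."""
    return {(x, y) for xs, ys in drawing for x, y in zip(xs, ys)}


def sample_line(x0, y0, x1, y1, duration_s, rate_hz):
    """Sample a straight, constant-speed stroke at an assumed cursor polling rate."""
    n = max(1, round(duration_s * rate_hz))
    xs = [round(x0 + (x1 - x0) * i / n) for i in range(n + 1)]
    ys = [round(y0 + (y1 - y0) * i / n) for i in range(n + 1)]
    return [xs, ys]


# A crude fish: the same three strokes drawn in two different orders.
body = [[20, 50, 80, 50, 20], [50, 30, 50, 70, 50]]
tail = [[80, 100, 100, 80], [50, 40, 60, 50]]
eye = [[30, 32], [45, 45]]

usual = [body, tail, eye]    # body first, as most people draw a fish
unusual = [eye, tail, body]  # piecemeal: eye, tail, and the body oval last

print(point_set(usual) == point_set(unusual))    # True: same final picture
print(to_stroke3(usual) == to_stroke3(unusual))  # False: different input sequence

# The same half-second stroke, polled once per frame at 60 Hz vs 144 Hz.
slow = sample_line(0, 0, 100, 0, 0.5, 60)
fast = sample_line(0, 0, 100, 0, 0.5, 144)
print(len(slow[0]), len(fast[0]))  # 31 73
```

A model that only looked at the finished picture would treat both fish identically, while a sequence model sees two different inputs, which is consistent with the "right words, wrong order" analogy. Likewise, the 144 Hz stroke arrives as a sequence 2.4 times longer, made of smaller steps; whether that actually confuses the real recognizer is the commenter's hypothesis, not something this sketch demonstrates.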
Ok Google, I'll draw a magnificent pillow… hope you'll guess it. How did it detect that this is a pillow? It's incredible! You have only 20 seconds, but the system is usually smart enough to detect what you're drawing within a few seconds. The impressive thing is that with just a few strokes it successfully detects what you're drawing, even if you're not exactly painting like Van Gogh.

The Quick, Draw! dataset page you've linked to above includes a link to the `quickdraw` Python module, which makes it super simple to visualize Quick, Draw! data: `from quickdraw import QuickDrawData`, then `qd = QuickDrawData()`, then `anvil = qd.` …
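If you would rather not install the `quickdraw` module mentioned above, a drawing can also be rendered straight from the stroke list, which is the same "recreate the image from the cursor data" idea raised in the comments. The minimal sketch below uses only the standard library. It assumes a record shaped like one line of the dataset's simplified ndjson files, with a `drawing` field holding strokes as `[xs, ys]` with non-negative coordinates; the record itself is invented for illustration, and `render_ascii` is a hypothetical helper, not part of any library.

```python
import json


def render_ascii(drawing, width=24, height=12):
    """Render [xs, ys] strokes as ASCII art by joining consecutive points.

    Assumes every stroke has at least one point and all coordinates are non-negative.
    """
    span_x = max(max(xs) for xs, _ in drawing) or 1
    span_y = max(max(ys) for _, ys in drawing) or 1
    grid = [[" "] * width for _ in range(height)]

    def cell(x, y):
        # Scale data coordinates (0..span) onto grid columns and rows.
        return round(x / span_x * (width - 1)), round(y / span_y * (height - 1))

    for xs, ys in drawing:
        pts = [cell(x, y) for x, y in zip(xs, ys)]
        # A single-point stroke becomes a zero-length segment so it still gets drawn.
        segments = list(zip(pts, pts[1:])) or [(pts[0], pts[0])]
        for (c0, r0), (c1, r1) in segments:
            n = max(abs(c1 - c0), abs(r1 - r0), 1)  # one step per grid cell crossed
            for i in range(n + 1):
                row = round(r0 + (r1 - r0) * i / n)
                col = round(c0 + (c1 - c0) * i / n)
                grid[row][col] = "#"
    return "\n".join("".join(row).rstrip() for row in grid)


# Hypothetical record shaped like one line of a simplified ndjson file; values are invented.
record = json.loads(
    '{"word": "square", "countrycode": "US", "recognized": true, '
    '"drawing": [[[0, 20, 20, 0, 0], [0, 0, 10, 10, 0]]]}'
)
print(record["word"], "recognized:", record["recognized"])
print(render_ascii(record["drawing"]))
```

Each line of a real ndjson file can be passed through `json.loads` the same way; the grid size is an arbitrary choice for terminal output.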
